When Building Software Is Faster Than Doing Your Job, and why Matt Shumer is only half right.

AnonLQ

1mo ago (edited)

Forewarning: what follows is detailed and somewhat technical. Only read on if you want to understand how to preserve your career. I expect critique; open debate about the future of knowledge work is the best discussion we can have right now.

Imagine it is 9:00 AM. You hold the pen on a critical legal filing due to the court at 4:00 PM, and your screen is a digital war zone.

Four reviewers have just come back simultaneously. Counsel has completely rewritten key paragraphs using track changes. The partner has left contradictory comment bubbles overlapping counsel’s rewrites. The client just called to embargo specific material for privilege reasons—an instruction that exists only as a frantic scribble on your legal pad. A junior associate just emailed a list of cross-referencing corrections.

If you’ve ever worked in law, finance, or consulting, you know exactly what happens next. You open Microsoft Word, attempt to use the "Combine" function, watch it completely mangle your complex formatting, and resign yourself to the manual grind. You open five windows side-by-side, alt-tabbing until your eyes bleed, trying to hold a four-dimensional jigsaw puzzle in your head while synthesizing the chaos paragraph by paragraph.

But imagine that while sipping your morning coffee, you had just read Matt Shumer’s viral Fortune article, "Something Big is Happening in AI". He warns of a "February 2020 moment," describing a new phenomenon called vibe coding, where you simply tell an AI what you want, and it writes the software for you.

Inspired by the premise, you make a radical, seemingly unhinged decision. Instead of brewing more coffee and suffering through the manual alt-tab grind, you close Word. You open an AI coding editor. You explain the exact mess in front of you and architect a bespoke piece of software to parse, collate, and organize the conflicting feedback.

By 11:00 AM, the software is live. You feed your documents into the app, it instantly processes the chaos into a clean, auditable checklist, and you easily hit your 4:00 PM deadline. You've completed the work using a custom application that did not even exist when you woke up.

The Epiphany: Just-In-Time Software

Here is the mind-bending reality: That hypothetical is no longer science fiction. It is the exact realization that hit me the morning after I survived that very 4:00 PM deadline.

Unfortunately, I didn't actually build the tool before my deadline. I lived through that exact nightmare scenario on a Tuesday and, like everyone else, synthesized the documents the old-fashioned, agonizing way.

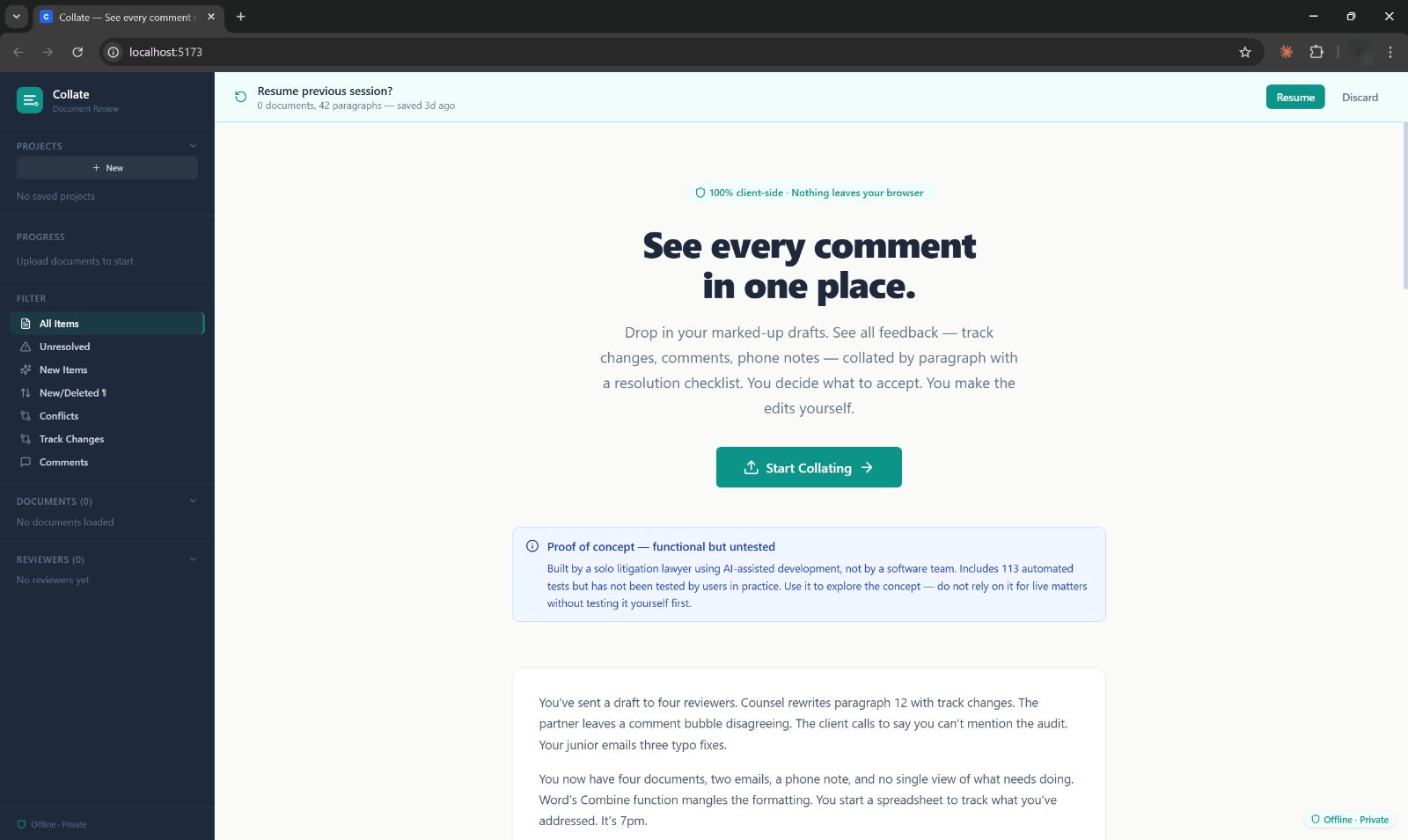

Early the next morning, still furious at the sheer friction of the overhead, I opened an AI coding environment. I am a litigator. I have zero background as a developer and have never formally learned to code. Despite this, I built a bespoke, highly secure, local-only browser-based application called Collate from absolute scratch. I finished it in 90 minutes, before I had even eaten breakfast.

As the final code compiled, a profound realization hit me: I should have built this yesterday.

Inventing, coding, and deploying a brand-new software platform would have genuinely been faster than doing the work manually the day before.

Compressing my workflow into a custom app on the fly wouldn't just have been a neat procrastination trick; it would have been the most ruthlessly efficient, rational way to complete the task. The Legal Quant way.

As vibe coding tools get exponentially faster, software development is ceasing to be a multi-month capital expenditure. It is becoming a reflex. I and those like me have fresh ideas for useful legal software every day.

We are entering the era of "just-in-time" software. Professionals will no longer tolerate broken, archaic workflows. Instead, the exact moment a problem occurs (and the associated insight of the systems thinker strikes), experts will construct bespoke tools on the fly. We will build disposable apps for single transactions, or permanent platforms for niche workflows, exactly when we need them.

The "Je Ne Sais Quoi" of the New Builder

If I had tried to build Collate the traditional way, it would have required a team of four developers, taken three months, and cost up to £150,000 (feel free to correct me here, please).

Why? Because the dominant cost of software isn’t writing the code. It’s knowledge transfer. It is the agonizing process of trying to get a software engineer to understand why an auto-merge feature is legally dangerous, or why a wholly deleted paragraph must still remain visible on the screen for compliance, and a hundred other micro decisions that a lawyer, as the end user, instinctively understands.

With vibe coding, that £150,000 translation tax is dead. My cost was a £20 AI subscription and 90 minutes. There was no translation loss because the person who understood the problem and the person writing the code were the exact same person.

But let’s be clear: you can’t just yell at a computer and get good software.

If the syntax is now practically free, what differentiates a brilliant builder from someone who churns out useless digital garbage? As it becomes regular practice for experts to build tools on the fly, I’m starting to realize that success relies on a certain magic sauce that has absolutely nothing to do with computer science.

I think it is a cocktail of four distinct traits:

1. Workflow Rebellion: A fundamental willingness to question traditional workflows. You must possess the audacity to look at a 20-year-old process, refuse to accept that "this is just how things are done," and ask: "How should this actually work?"

2. Inventiveness (Systems Thinking): The imagination to look at a chaotic mess of emails and Word documents and instantly map the underlying logic tree and data structure in your head.

3. Surgical Communication: The precision to ruthlessly articulate that logic and dictate strict boundaries to a frontier AI model.

4. Professional Taste: The judgment to know exactly when a tool needs to step back and let the human make the final call.

Where Matt Shumer Got It Wrong

In his article, Shumer’s message to the general public is a warning: AI is coming for knowledge work, and it’s going to do it on autopilot. He describes telling an AI what he wants, walking away for four hours, and coming back to find the work done.

I love this article, and it has pushed into public consciousness something many of us have known for months, if not years. However, from the trenches of a high-stakes legal practice, Shumer is only half right.

The real revolution isn’t about humans walking away while AI replaces them. It’s about AI handing domain experts the keys to the kingdom. During my 90-minute build, I didn't walk away. I drove. Over a 30-minute design conversation with the AI (Claude Opus), I had to veto the machine repeatedly using 15 years of litigation experience:

Trust: The AI wanted to use a cloud backend; I knew a compliance officer would never allow privileged documents on a random server, so I forced a local, in-browser architecture (Rust compiled to WebAssembly) that structurally prevents outbound network requests.

Workflow Realities: The AI wanted to prioritize comment bubbles. I knew that substantive legal feedback overwhelmingly arrives as track changes, so I made the AI build a complex parser for an inline diff view.

The Philosophy: The AI naturally wanted to build an "autopilot" that automatically merged documents for me; I demanded a "checklist" philosophy where the human must explicitly resolve every single item.
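
For the builders, here is a minimal sketch of what the "checklist, not autopilot" rule might look like as Rust types. This is not Collate's actual code: every name below (`Checklist`, `ChecklistItem`, `Resolution`, `UnresolvedItems`, and so on) is hypothetical and chosen for illustration. The idea it shows is that each piece of feedback starts as pending, only a human decision can change that, and the export refuses to run while anything is still pending.

```rust
/// Where a piece of feedback came from (variant names are illustrative).
#[derive(Debug, Clone, PartialEq)]
pub enum Source {
    TrackChange,
    Comment,
    PhoneNote,
    Email,
}

/// The human's decision on one item. `Pending` means no decision yet.
#[derive(Debug, Clone, PartialEq)]
pub enum Resolution {
    Pending,
    Accepted,
    Rejected,
    Modified(String), // the human's own replacement wording
}

#[derive(Debug, Clone)]
pub struct ChecklistItem {
    pub reviewer: String,
    pub source: Source,
    pub paragraph: usize,
    pub proposal: String,
    pub resolution: Resolution,
}

/// Error returned when an export is attempted with items still pending.
#[derive(Debug, PartialEq)]
pub struct UnresolvedItems(pub usize);

#[derive(Debug, Default)]
pub struct Checklist {
    items: Vec<ChecklistItem>,
}

impl Checklist {
    pub fn new() -> Self {
        Self::default()
    }

    /// Record a reviewer's proposal. It always starts as `Pending`.
    /// Returns the item's index so it can be resolved later.
    pub fn add(&mut self, reviewer: &str, source: Source, paragraph: usize, proposal: &str) -> usize {
        self.items.push(ChecklistItem {
            reviewer: reviewer.to_string(),
            source,
            paragraph,
            proposal: proposal.to_string(),
            resolution: Resolution::Pending,
        });
        self.items.len() - 1
    }

    /// Record a human decision on one item. Returns false for an unknown index.
    /// There is deliberately no `accept_all` or auto-merge method.
    pub fn resolve(&mut self, index: usize, resolution: Resolution) -> bool {
        match self.items.get_mut(index) {
            Some(item) => {
                item.resolution = resolution;
                true
            }
            None => false,
        }
    }

    /// Number of items nobody has decided yet.
    pub fn pending(&self) -> usize {
        self.items.iter().filter(|i| i.resolution == Resolution::Pending).count()
    }

    /// Produce one audit line per item, but only once every item is resolved.
    pub fn export(&self) -> Result<Vec<String>, UnresolvedItems> {
        let pending = self.pending();
        if pending > 0 {
            return Err(UnresolvedItems(pending));
        }
        Ok(self
            .items
            .iter()
            .map(|i| format!("para {} | {} via {:?} | {:?}", i.paragraph, i.reviewer, i.source, i.resolution))
            .collect())
    }
}
```

The design choice is in what is missing: with no bulk-accept path, the only way to reach a successful `export` is to call `resolve` on every item, which is the "advisory, not executive" behaviour described above.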

The AI can write the code brilliantly. But it cannot generate the philosophical guardrails of your profession.

I am a member of Legal Quants, a community of over 80 lawyers (and growing rapidly) across 17 jurisdictions who build software as a core part of how we work. We all have the deep expertise to know exactly what needs to be built.

Matt Shumer thinks AI is a threat to the profession. I think he is only half right, and I'm optimistic about the longevity of my own legal practice. When the experts stop waiting for tech companies to build their tools and start building them themselves, it isn't a threat. It’s the profession levelling up.

---

Appendix: The 90-Minute Blueprint (For the Builders)

If you want to know exactly how a non-developer builds a secure, local-only application before breakfast, here is the blueprint. There is no magic prompting language. The AI executes brilliantly because the problem was defined with extreme specificity by someone who had just lived through the pain.

Phase 1: The Design Conversation (Night 1)

Scope discipline is the single most important factor in AI-assisted development, and it happens before the code editor is even opened.

Irritated by the overhead of my 4:00 PM deadline, I spent 30 minutes that evening in a plain-English back-and-forth with Claude Opus 4.6. I described the broken workflows. Claude helped structure them into a technical architecture. By the end of this chat, Claude produced a 600-line master prompt detailing the file structure, Rust types, XML parsing logic, React component layouts, and an explicit list of "Things NOT to Build."
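
To give a flavour of what "XML parsing logic" means for track changes: a .docx file is a ZIP archive whose main body lives in `word/document.xml`, where tracked insertions are wrapped in `w:ins` elements and tracked deletions in `w:del` elements (with the deleted text inside `w:delText`), each carrying a `w:author` attribute. The sketch below is not the parser from the master prompt, which is not reproduced here. It is a deliberately naive, standard-library-only string scanner, with hypothetical names (`tracked_changes`, `TrackedChange`, `Kind`), that assumes the XML has already been unzipped, that attribute values contain no `>`, and that XML entities such as `&amp;` can be left undecoded. A real tool would use a proper XML parser.

```rust
#[derive(Debug, Clone, Copy, PartialEq)]
pub enum Kind {
    Insertion,
    Deletion,
}

#[derive(Debug, PartialEq)]
pub struct TrackedChange {
    pub kind: Kind,
    pub author: String,
    pub text: String,
}

/// Find the next opening tag named exactly `name` at or after `from`.
/// Returns (index of '<', index just past the tag's closing '>').
fn find_open(xml: &str, name: &str, from: usize) -> Option<(usize, usize)> {
    let needle = format!("<{}", name);
    let mut pos = from;
    while let Some(rel) = xml[pos..].find(&needle) {
        let start = pos + rel;
        let after = start + needle.len();
        // Exact name only: rejects look-alikes such as <w:insideH> or <w:tab/>.
        if matches!(xml[after..].chars().next(), Some(' ') | Some('>') | Some('/')) {
            let end = after + xml[after..].find('>')? + 1;
            return Some((start, end));
        }
        pos = after;
    }
    None
}

/// Read one attribute value out of an opening tag, if present.
fn attr(tag: &str, name: &str) -> Option<String> {
    let needle = format!(" {}=\"", name);
    let start = tag.find(&needle)? + needle.len();
    let len = tag[start..].find('"')?;
    Some(tag[start..start + len].to_string())
}

/// Concatenate the contents of every `name` element inside `body`.
fn collect_text(body: &str, name: &str) -> String {
    let close = format!("</{}>", name);
    let mut out = String::new();
    let mut pos = 0;
    while let Some((_, open_end)) = find_open(body, name, pos) {
        pos = open_end;
        if body[..open_end].ends_with("/>") {
            continue; // self-closing element carries no text
        }
        match body[open_end..].find(&close) {
            Some(rel) => {
                out.push_str(&body[open_end..open_end + rel]);
                pos = open_end + rel + close.len();
            }
            None => break,
        }
    }
    out
}

/// List tracked insertions and deletions in document order.
pub fn tracked_changes(xml: &str) -> Vec<TrackedChange> {
    let mut found: Vec<(usize, TrackedChange)> = Vec::new();
    for (name, text_name, kind) in [("w:ins", "w:t", Kind::Insertion), ("w:del", "w:delText", Kind::Deletion)] {
        let close = format!("</{}>", name);
        let mut pos = 0;
        while let Some((start, open_end)) = find_open(xml, name, pos) {
            pos = open_end;
            let tag = &xml[start..open_end];
            if tag.ends_with("/>") {
                continue; // self-closing marker on a paragraph mark: no text
            }
            let rel = match xml[open_end..].find(&close) {
                Some(r) => r,
                None => break,
            };
            let body = &xml[open_end..open_end + rel];
            found.push((
                start,
                TrackedChange {
                    kind,
                    author: attr(tag, "w:author").unwrap_or_default(),
                    text: collect_text(body, text_name),
                },
            ));
            pos = open_end + rel + close.len();
        }
    }
    found.sort_by_key(|(start, _)| *start);
    found.into_iter().map(|(_, change)| change).collect()
}
```

Each `TrackedChange` that comes out of a scan like this is the raw material for one checklist item: who proposed it, whether it adds or removes text, and what the text is.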

Phase 2: The Build (Morning 2)

The next morning, armed with the master spec, I opened Cursor (an AI coding editor). The session ran as a single continuous conversation for about 90 minutes. I did not write or edit any code directly. I steered the AI through eight main prompts:

1. The Spec: I fed Cursor the 600-line master prompt. It built the entire Rust core and scaffolded the React frontend. First attempt. Zero type errors. It still boggles my mind.

2. The Standard of Taste: I pointed the AI to a separate, highly polished tool I had previously built and simply said, "Match this." The AI rebuilt Collate’s entire frontend to match the reference standard. (Takeaway: The AI produces exactly to the ceiling of taste you set for it, no higher).

3. The Edge Cases: I threw workflow curveballs at the AI based on lived experience: "What happens when counsel deletes an entire paragraph? What if a new version arrives mid-review?" The AI had no way to anticipate these. It adapted, designing a paragraph classification system in Rust to handle wholesale insertions/deletions and preserve existing decisions when new versions arrive mid-flight.

4. Concept Alignment: I reinforced the "Checklist, not autopilot" directive. Cursor refactored the entire app to remove any auto-merge capabilities, ensuring explicit human resolution and auditable exports. It also wrote the UI copy explaining this "advisory, not executive" principle to users.

5. Testing: I insisted on realistic, messy legal content for testing: overlapping comments, track changes within track changes, and phone notes attributed to specific reviewers. The AI expanded the test suite to 113 rigorous tests (47 Rust, 66 Vitest).

6. Operations & State: We configured project management features, auto-save, and a Windows launcher. A senior associate has multiple live matters; if a tool doesn’t preserve state per matter, it’s just a toy.

7. Bug Diagnosis: A silent failure occurred on launch. I didn't debug it. I simply copied the error logs, pasted them back into Cursor, and the AI diagnosed and fixed the issue instantly. (The AI didn't know it was broken until I tested it in a real-world environment.)

8. UX & Ergonomics: We implemented a persistent sidebar and named project saves. This was driven purely by how a lawyer actually uses a second monitor during a drafting session: practical ergonomics over aesthetics.
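
The edge-case handling in step 3 can be sketched as follows. This is an illustration under stated assumptions, not the paragraph classification system the AI actually wrote: the names `classify`, `carry_over`, `Classified`, and `ParagraphStatus` are hypothetical, paragraphs are compared by exact text using a standard longest-common-subsequence alignment, and decisions are assumed to be keyed by paragraph text. In this simplified model an edited paragraph shows up as a deletion followed by an insertion, and a wholly deleted paragraph stays in the output so it remains visible.

```rust
use std::collections::HashMap;

#[derive(Debug, Clone, PartialEq)]
pub enum ParagraphStatus {
    Unchanged,
    Inserted,
    Deleted,
}

#[derive(Debug, Clone, PartialEq)]
pub struct Classified {
    pub text: String,
    pub status: ParagraphStatus,
}

fn tag(text: &str, status: ParagraphStatus) -> Classified {
    Classified { text: text.to_string(), status }
}

/// Align two versions of a document paragraph by paragraph.
/// Deleted paragraphs are kept in the output, in their original position.
pub fn classify(old: &[&str], new: &[&str]) -> Vec<Classified> {
    let (n, m) = (old.len(), new.len());
    // lcs[i][j] = length of the longest common subsequence of old[i..] and new[j..].
    let mut lcs = vec![vec![0usize; m + 1]; n + 1];
    for i in (0..n).rev() {
        for j in (0..m).rev() {
            lcs[i][j] = if old[i] == new[j] {
                lcs[i + 1][j + 1] + 1
            } else {
                lcs[i + 1][j].max(lcs[i][j + 1])
            };
        }
    }
    let mut out = Vec::new();
    let (mut i, mut j) = (0, 0);
    while i < n || j < m {
        if i < n && j < m && old[i] == new[j] {
            out.push(tag(old[i], ParagraphStatus::Unchanged));
            i += 1;
            j += 1;
        } else if i < n && (j == m || lcs[i + 1][j] >= lcs[i][j + 1]) {
            out.push(tag(old[i], ParagraphStatus::Deleted));
            i += 1;
        } else {
            out.push(tag(new[j], ParagraphStatus::Inserted));
            j += 1;
        }
    }
    out
}

/// Keep only the decisions whose paragraph survived unchanged in the new version.
/// Anything else has to go back on the checklist for a fresh human decision.
pub fn carry_over(decisions: &HashMap<String, String>, classified: &[Classified]) -> HashMap<String, String> {
    classified
        .iter()
        .filter(|p| p.status == ParagraphStatus::Unchanged)
        .filter_map(|p| decisions.get(&p.text).map(|d| (p.text.clone(), d.clone())))
        .collect()
}
```

When a new version arrives mid-review, the flow would be to run `classify` against the version the decisions were made on, then `carry_over` to see which decisions still stand. Keying by exact text is the weakest assumption here; it breaks down for duplicate paragraphs, so a real tool would want stable paragraph identifiers.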

The Result: A permanently deployed tool that processes documents locally, scales infinitely, and ensures I will never have to alt-tab through conflicting track changes at 3:00 PM ever again.

Tomorrow, I'll build another tool.